Enterprise systems rely on carefully designed access controls. HR systems, investigation tools, ticketing platforms, and knowledge bases each enforce their own permission models. But when an AI agent retrieves information across all of them at once, those boundaries can break.

In a recent Risk+ assessment of an OpenClaw deployment, AIQURIS found that the agent could surface information that no single source system would have exposed on its own. The underlying cause was not hallucination or prompt injection, but a structural weakness in the retrieval pipeline: authorisation drift.

OpenClaw and the Structural Risk in Enterprise AI Agents

OpenClaw is an autonomous AI agent platform that has rapidly attracted both developer adoption and security scrutiny. Built by Austrian developer Peter Steinberger and launched in November 2025 under the name Clawdbot, it accumulated over 140,000 GitHub stars within weeks of launch [1].

The platform runs locally and connects to messaging platforms like WhatsApp, Slack, and Telegram. It can autonomously manage email, execute commands, and take actions across a user’s digital environment without relying on a hosted service. Its creator described it simply as “AI that actually does things.”

That framing is precisely what has made OpenClaw compelling to individuals and alarming to enterprise security teams in equal measure.

CrowdStrike has documented how adversaries can turn misconfigured OpenClaw instances into backdoor agents, using malicious instructions planted in emails and webpages to leak data or move laterally [2]. OpenClaw instances have also been found connected to enterprise systems such as Salesforce, GitHub, and Slack [3].

Cisco found that a malicious third-party OpenClaw skill could perform silent data exfiltration and prompt injection without the user’s awareness [4]. Researchers cited by Infosecurity Magazine identified over 40,000 publicly exposed instances, 63% of which were found to be vulnerable [5], and a single security audit of the codebase identified 512 vulnerabilities, eight of them classified as critical [6].

Much of the public debate around OpenClaw has focused on prompt injection and the risks of autonomous behaviour. But autonomy is also the source of the value these systems deliver. The more an agent can act independently across systems, the more it can unlock.

The question is not whether to constrain autonomy, but where and how to govern it. In enterprise deployments, that question has a structural answer.

When Aggregation Becomes Amplification

To ground this in a concrete case: at AIQURIS, we conducted a Risk+ inherent risk snapshot on an OpenClaw-based internal operations assistant. The agent was configured to retrieve and synthesise information across HR systems, case management tools, investigation records, a ticketing platform, and an internal knowledge base. In enterprise AI deployments, attention typically focuses on several well-known risks:

- Hallucination, where AI models generate plausible but incorrect outputs

- Prompt injection vulnerabilities

- Gaps in autonomy control

All are legitimate concerns.

However, the primary risk identified in our assessment was none of these.

The central exposure was authorisation drift across the retrieval-augmented generation (RAG) pipeline. This is a structural weakness that emerges when the access controls of individual source systems become misaligned with the abstraction layer created when those systems are aggregated for retrieval.

Authorisation drift occurs when the access-control semantics of source systems are not preserved with fidelity across ingestion, indexing, retrieval, and response assembly layers.

Understanding this requires looking at how a RAG-based agent operates in an enterprise context. Each connected system, such as HR, investigations, security, legal, or ticketing, operates with its own access-control semantics. Permissions are often highly granular: they may be role-based, case-specific, jurisdictionally scoped, or tied to temporary assignments.

For example, a user authorised to view an open investigation record is not necessarily authorised to view the associated HR disciplinary file, even if both systems are connected to the agent.
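The gap between source-system semantics and an aggregated index can be sketched in a few lines of Python. This is a minimal illustration, not OpenClaw code: it assumes a hypothetical source model in which access depends on role, jurisdiction, and a time-limited case assignment, and compares it with a flattened, role-only check of the kind an index might store. All names (`User`, `Record`, `source_allows`, `flattened_allows`, `drifted`) and example records are illustrative.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Dict, FrozenSet, List, Optional


@dataclass
class User:
    user_id: str
    roles: FrozenSet[str]
    jurisdiction: str
    # Hypothetical: case IDs the user is temporarily assigned to, with an expiry date.
    case_assignments: Dict[str, date] = field(default_factory=dict)


@dataclass
class Record:
    record_id: str
    system: str
    required_role: str
    jurisdiction: str
    case_id: Optional[str] = None  # set when access is scoped to a specific case


def source_allows(user: User, record: Record, today: date) -> bool:
    """Source-system semantics: role AND jurisdiction AND, if case-scoped, a live assignment."""
    if record.required_role not in user.roles:
        return False
    if record.jurisdiction != user.jurisdiction:
        return False
    if record.case_id is not None:
        expiry = user.case_assignments.get(record.case_id)
        if expiry is None or expiry < today:
            return False
    return True


def flattened_allows(user: User, record: Record) -> bool:
    """Role-only check: case, jurisdiction and expiry semantics were lost at ingestion."""
    return record.required_role in user.roles


def drifted(user: User, records: List[Record], today: date) -> List[str]:
    """Records the flattened check would expose although the source system denies them."""
    return [r.record_id for r in records
            if flattened_allows(user, r) and not source_allows(user, r, today)]


# Example: an investigator assigned to CASE-7 only.
today = date(2026, 2, 15)
investigator = User("u1", frozenset({"investigator"}), "EU", {"CASE-7": date(2026, 3, 31)})
records = [
    Record("inv-7", "investigations", "investigator", "EU", case_id="CASE-7"),
    Record("inv-9", "investigations", "investigator", "EU", case_id="CASE-9"),
    Record("hr-3", "hr", "hr_case_manager", "EU", case_id="CASE-7"),
]
print(drifted(investigator, records, today))  # ['inv-9']
```

The HR disciplinary file (`hr-3`) is denied by both checks, as in the example above, while `inv-9` shows how dropping case scoping silently widens access without any single rule looking wrong.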

When a RAG agent aggregates across these domains, it introduces an abstraction layer between the user and the underlying systems. That layer handles ingestion, indexing, retrieval, and response assembly.

If permission controls are not enforced with exact fidelity at every stage of this pipeline, authorisation drift can accumulate [7]. Traditional role-based access controls were not designed for this retrieval model, and access controls in AI systems cannot straightforwardly mirror the custom permission logic of underlying source systems [8].
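One way to picture enforcement fidelity across the pipeline is to carry the source ACL with every chunk and check it at more than one stage. The Python sketch below is illustrative only: it assumes simple group-based ACLs, uses keyword overlap in place of vector search, and all function names (`ingest`, `build_index`, `retrieve`, `assemble`) are hypothetical rather than any product’s API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet, List


@dataclass(frozen=True)
class Chunk:
    doc_id: str
    text: str
    acl: FrozenSet[str]  # groups allowed to read the source document, copied at ingestion


def ingest(doc_id: str, text: str, source_acl: FrozenSet[str], size: int = 8) -> List[Chunk]:
    """Ingestion: split into word windows; every chunk inherits the source ACL."""
    if not source_acl:
        raise ValueError(f"{doc_id}: refusing to ingest a document without an ACL")
    words = text.split()
    return [Chunk(doc_id, " ".join(words[i:i + size]), source_acl)
            for i in range(0, len(words), size)]


def build_index(chunks: List[Chunk]) -> List[Chunk]:
    """Indexing: reject any chunk that lost its ACL on the way in."""
    for chunk in chunks:
        if not chunk.acl:
            raise ValueError(f"{chunk.doc_id}: chunk without ACL reached the index")
    return list(chunks)


def retrieve(index: List[Chunk], query: str, user_groups: FrozenSet[str], k: int = 3) -> List[Chunk]:
    """Retrieval: drop chunks the user cannot read *before* ranking."""
    terms = set(query.lower().split())

    def score(chunk: Chunk) -> int:
        return len(terms & set(chunk.text.lower().split()))

    visible = [c for c in index if c.acl & user_groups]
    ranked = sorted(visible, key=score, reverse=True)
    return [c for c in ranked[:k] if score(c) > 0]


def assemble(chunks: List[Chunk], user_groups: FrozenSet[str],
             live_acl: Callable[[str], FrozenSet[str]]) -> str:
    """Response assembly: re-check each source against its *current* ACL, since the index may be stale."""
    kept = [c for c in chunks if live_acl(c.doc_id) & user_groups]
    return "\n".join(f"[{c.doc_id}] {c.text}" for c in kept)


# Example: an investigations user querying across two connected systems.
source_acls: Dict[str, FrozenSet[str]] = {
    "inv-7": frozenset({"investigations"}),
    "hr-3": frozenset({"hr"}),
}
index = build_index(
    ingest("inv-7", "Interview notes for case 7 are pending review", source_acls["inv-7"])
    + ingest("hr-3", "Disciplinary outcome for case 7 is a final warning", source_acls["hr-3"])
)
user = frozenset({"investigations"})
hits = retrieve(index, "case 7 outcome", user)
before = assemble(hits, user, source_acls.__getitem__)
source_acls["inv-7"] = frozenset({"legal"})  # access revoked in the source system
after = assemble(hits, user, source_acls.__getitem__)
print(before)       # [inv-7] Interview notes for case 7 are pending review
print(repr(after))  # ''
```

Filtering before ranking keeps restricted chunks from influencing scores or result counts, and the second check at assembly catches permissions that were revoked after indexing.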

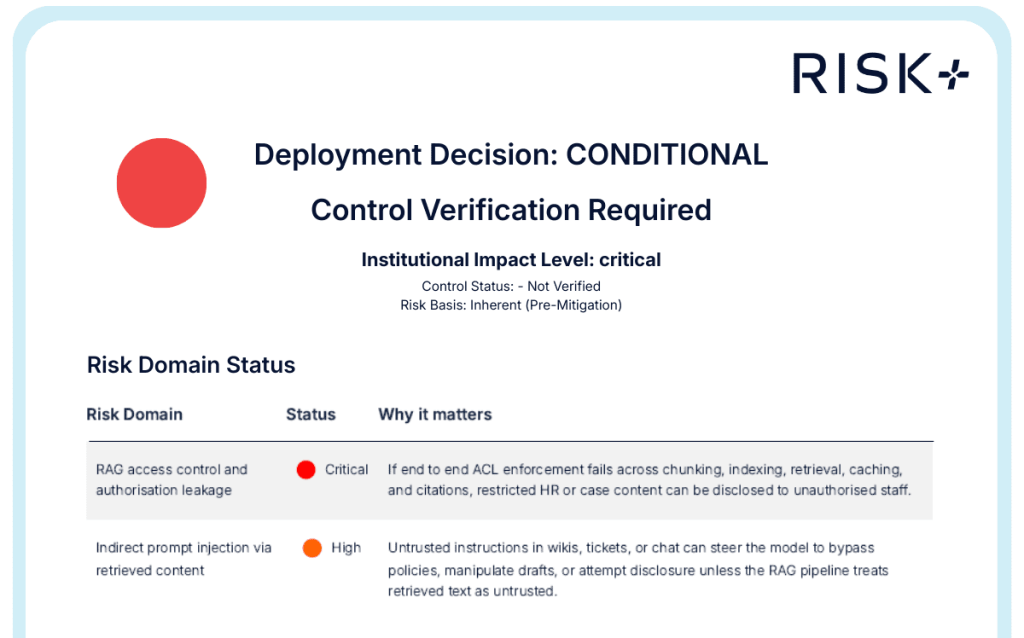

What the Risk+ Assessment Found

In the AIQURIS Risk+ assessment, three of the five connected repositories showed inconsistent permission semantics at the indexing layer.

Indexed metadata and summary fields from restricted records were retrievable in contexts where direct system access would have denied visibility. The agent was not behaving maliciously. The model was not misaligned. The prompts were not adversarial. The architecture simply did not preserve permission fidelity across the aggregation boundary.

As a result, the agent could surface information that no single source system would have exposed to the user directly. The exposure emerged because architectural enforcement no longer mirrored source reality. The same pattern has been described beyond this deployment: Sombrainc notes that vulnerabilities in RAG data layers are increasingly becoming the weakest link in enterprise AI security, precisely because the failure mode emerges from system architecture rather than from model behaviour [9].
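The indexing-layer finding, where summary and metadata fields from restricted records were retrievable, suggests a simple invariant worth testing: no derived index entry should be readable by anyone its parent record would deny. The Python sketch below assumes a hypothetical connector that emits body, summary and metadata entries per record; with `inherit=False` it mimics a faulty connector that gives derived fields a broader default ACL. The names and the `all_staff` group are illustrative.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List


@dataclass(frozen=True)
class IndexEntry:
    entry_id: str
    parent_id: str          # source record this entry was derived from
    kind: str               # "body", "summary" or "metadata"
    acl: FrozenSet[str]     # groups allowed to retrieve this entry


def make_entries(parent_id: str, parent_acl: FrozenSet[str], inherit: bool = True) -> List[IndexEntry]:
    """Derive body, summary and metadata entries for one source record.

    inherit=False mimics a (hypothetical) faulty connector that gives derived
    fields a default ACL instead of the parent record's ACL.
    """
    derived_acl = parent_acl if inherit else frozenset({"all_staff"})
    return [
        IndexEntry(f"{parent_id}#body", parent_id, "body", parent_acl),
        IndexEntry(f"{parent_id}#summary", parent_id, "summary", derived_acl),
        IndexEntry(f"{parent_id}#meta", parent_id, "metadata", derived_acl),
    ]


def broader_than_source(entries: List[IndexEntry],
                        source_acls: Dict[str, FrozenSet[str]]) -> List[str]:
    """Entries whose ACL admits any group the parent record does not admit."""
    return [e.entry_id for e in entries if not e.acl <= source_acls[e.parent_id]]


source_acls = {"hr-3": frozenset({"hr"})}
faulty = make_entries("hr-3", source_acls["hr-3"], inherit=False)
fixed = make_entries("hr-3", source_acls["hr-3"])
print(broader_than_source(faulty, source_acls))  # ['hr-3#summary', 'hr-3#meta']
print(broader_than_source(fixed, source_acls))   # []
```

The subset test (`<=`) treats groups as flat labels; real permission models with hierarchies or conditions would need a richer comparison.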

Authorisation drift was the primary finding, but not the only one.

Additional high-severity exposures included:

- indirect prompt injection via retrieved content

- over-disclosure of personal data in outputs and drafts

- absence of AI-specific release gates

Taken together, these findings indicated that the deployment was not ready for high-sensitivity repositories without verified controls in place.

The overall deployment decision was therefore Conditional, requiring control verification before go-live.

Why This Risk Concentrates in High-Sensitivity Domains

In lower-sensitivity contexts, the practical impact of this kind of drift may be limited.

In high-sensitivity domains such as disciplinary matters, active investigations, regulated casework, and security incidents, the consequences scale rapidly. The agent effectively becomes a cross-domain access broker. Where permission enforcement is imperfect, it does not merely expose information from one system; it amplifies existing inconsistencies across all connected systems simultaneously.

This is not primarily a question of policy intent. Policies define who should have access. Technical enforcement determines who actually does.

Confidentiality breaches in these contexts can create regulatory exposure under GDPR and sector-specific data protection regimes where unauthorised internal disclosure may constitute a reportable breach.

RAG systems that do not enforce granular, attribute-based access controls can allow queries to retrieve documents beyond a user’s authorised scope, a risk increasingly flagged as a structural gap in enterprise AI deployments [10]. Beyond regulatory exposure, such failures can also erode the internal trust that sensitive organisational workflows depend on, particularly in HR and investigations contexts, where perceived fairness depends on information being rigorously controlled.
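Attribute-based access control evaluates a policy over attributes of the requester and the resource rather than a single role. A minimal Python sketch, assuming a hypothetical three-rule policy and plain dictionary attributes (not any particular ABAC engine), shows how such a check can act as a deny-by-default filter on retrieval candidates:

```python
from typing import Any, Callable, Dict, List

Attrs = Dict[str, Any]
Rule = Callable[[Attrs, Attrs], bool]

# Hypothetical policy: a resource is visible only if every rule holds.
POLICY: List[Rule] = [
    lambda subject, resource: resource["domain"] in subject["domains"],
    lambda subject, resource: subject["clearance"] >= resource["sensitivity"],
    lambda subject, resource: (
        resource.get("case_id") is None or resource["case_id"] in subject["cases"]
    ),
]


def permitted(subject: Attrs, resource: Attrs, policy: List[Rule] = POLICY) -> bool:
    """Deny by default: all rules must pass, and a missing attribute counts as a denial."""
    try:
        return all(rule(subject, resource) for rule in policy)
    except KeyError:
        return False


def filter_candidates(subject: Attrs, candidates: List[Attrs]) -> List[str]:
    """Apply the policy to retrieval candidates before they reach the model."""
    return [r["id"] for r in candidates if permitted(subject, r)]


analyst = {"domains": {"investigations"}, "clearance": 2, "cases": {"CASE-7"}}
candidates = [
    {"id": "inv-7", "domain": "investigations", "sensitivity": 2, "case_id": "CASE-7"},
    {"id": "inv-9", "domain": "investigations", "sensitivity": 2, "case_id": "CASE-9"},
    {"id": "hr-3", "domain": "hr", "sensitivity": 1},
    {"id": "kb-1", "domain": "investigations"},  # no sensitivity label
]
print(filter_candidates(analyst, candidates))  # ['inv-7']
```

Treating unlabelled resources as denied, as `kb-1` shows, is one conservative design choice: it trades some recall for never exposing records whose sensitivity is unknown.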

From Model Safety to System Integrity

The conversation that OpenClaw has sparked is valuable. But the debate around enterprise agent platforms needs to move beyond model behaviour and prompt safety.

Authorisation drift is not merely an isolated implementation error. It is a structural property of aggregation when enforcement fidelity is not engineered end to end.

Ingestion, indexing, retrieval, and response assembly are four distinct enforcement points, each of which can drift independently. The OWASP Top 10 for LLM Applications lists vector and embedding weaknesses (the category covering RAG pipelines) and excessive agency among the highest-priority risks in LLM deployments [11]. This classification reflects the fact that these exposures are structurally embedded rather than incidental to any particular platform.

Security research into deployed OpenClaw instances corroborates this: shared global context across users, unsandboxed tool execution, and external content injection paths have all been documented as failure modes arising from architectural decisions rather than model behaviour [12].
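Because ingestion, indexing, retrieval, and response assembly can each drift independently, it helps to know which stage diverged, not just that the end result is wrong. The following Python sketch assumes that the ACL a record carries can be observed (for example, logged) at each of the four stages; the stage names and the `locate_drift` helper are hypothetical.

```python
from typing import Dict, FrozenSet

STAGES = ("ingestion", "indexing", "retrieval", "response_assembly")


def locate_drift(source_acl: FrozenSet[str],
                 observed: Dict[str, FrozenSet[str]]) -> Dict[str, str]:
    """Compare the ACL seen at each stage with the source system's ACL.

    'gained' groups mean over-exposure; 'lost' groups mean over-restriction.
    A stage with no recorded ACL is reported, because it cannot be verified.
    """
    drift: Dict[str, str] = {}
    for stage in STAGES:
        if stage not in observed:
            drift[stage] = "no ACL recorded"
            continue
        gained = observed[stage] - source_acl
        lost = source_acl - observed[stage]
        if gained or lost:
            drift[stage] = f"gained={sorted(gained)} lost={sorted(lost)}"
    return drift


source_acl = frozenset({"investigations"})
observed = {
    "ingestion": frozenset({"investigations"}),
    "indexing": frozenset({"investigations", "all_staff"}),
    "retrieval": frozenset({"investigations", "all_staff"}),
}
for stage, finding in locate_drift(source_acl, observed).items():
    print(stage, finding)
```

In this example the drift first appears at indexing and propagates to retrieval, which points remediation at the indexer rather than at the model.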

OpenClaw is not inherently unsafe for enterprise use, and neither is any other RAG-based agent platform. The risk lies not in the model or the platform as such, but in architectural alignment: whether authorisation integrity is preserved across system boundaries.

The AIQURIS Risk+ snapshot assessed inherent exposure before mitigation. Residual risk depends on how effectively controls are engineered and verified across the full architecture, including not only the permission layer but also audit and monitoring, connector governance, data minimisation at indexing, response filtering, and incident response capability. Addressing authorisation drift is necessary, but it is not sufficient on its own.
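Of the controls listed above, data minimisation at indexing is the easiest to show in isolation. A minimal Python sketch, assuming hypothetical per-connector field allowlists (the connector names and fields are illustrative), drops every field not explicitly approved before a record reaches the index:

```python
from typing import Any, Dict, FrozenSet

# Hypothetical allowlists: only these fields from each connector may be indexed.
INDEXABLE_FIELDS: Dict[str, FrozenSet[str]] = {
    "hr": frozenset({"record_id", "policy_reference"}),
    "tickets": frozenset({"record_id", "title", "status", "resolution"}),
}


def minimise(system: str, record: Dict[str, Any]) -> Dict[str, Any]:
    """Keep only allowlisted fields; an unknown connector contributes nothing."""
    allowed = INDEXABLE_FIELDS.get(system, frozenset())
    return {key: value for key, value in record.items() if key in allowed}


hr_record = {
    "record_id": "hr-3",
    "employee_name": "J. Doe",
    "disciplinary_notes": "Final warning issued",
    "policy_reference": "POL-12",
}
print(minimise("hr", hr_record))     # {'record_id': 'hr-3', 'policy_reference': 'POL-12'}
print(minimise("legal", hr_record))  # {}
```

Using an allowlist rather than a denylist means a field added to a source system later stays out of the index until someone approves it.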

Alignment must be engineered, tested, and continuously verified. Architectures evolve over time: connectors change, repositories are added, and permission semantics shift, so verification cannot be a one-time exercise.
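Continuous verification can take the form of a permission parity test that reruns whenever a connector, repository, or permission model changes. The Python sketch below assumes two hypothetical decision functions, one querying the source system and one exercising the agent’s retrieval path, and compares them over sampled (user, record) pairs; here any over-exposure blocks release. The decision tables are stand-ins, not real system calls.

```python
from itertools import product
from typing import Callable, Iterable, List, Tuple

Decision = Callable[[str, str], bool]  # (user_id, record_id) -> is access allowed?


def parity_check(pairs: Iterable[Tuple[str, str]],
                 source: Decision, pipeline: Decision) -> List[Tuple[str, str, str]]:
    """Return every pair where the pipeline's decision differs from the source's."""
    findings = []
    for user_id, record_id in pairs:
        allowed_by_source = source(user_id, record_id)
        allowed_by_pipeline = pipeline(user_id, record_id)
        if allowed_by_pipeline and not allowed_by_source:
            findings.append((user_id, record_id, "over-exposure"))
        elif allowed_by_source and not allowed_by_pipeline:
            findings.append((user_id, record_id, "over-restriction"))
    return findings


# Stand-in decision tables; in practice these would call the real systems.
source_grants = {("alice", "inv-7"), ("bob", "hr-3")}
pipeline_grants = source_grants | {("alice", "hr-3")}  # drift introduced by a connector change

findings = parity_check(
    product(["alice", "bob"], ["inv-7", "hr-3"]),
    source=lambda u, r: (u, r) in source_grants,
    pipeline=lambda u, r: (u, r) in pipeline_grants,
)
release_blocked = any(kind == "over-exposure" for _, _, kind in findings)
print(findings)         # [('alice', 'hr-3', 'over-exposure')]
print(release_blocked)  # True
```

Sampling (user, record) pairs cannot prove the absence of drift, but running the same check on every change turns verification into a recurring release gate rather than a one-time exercise.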

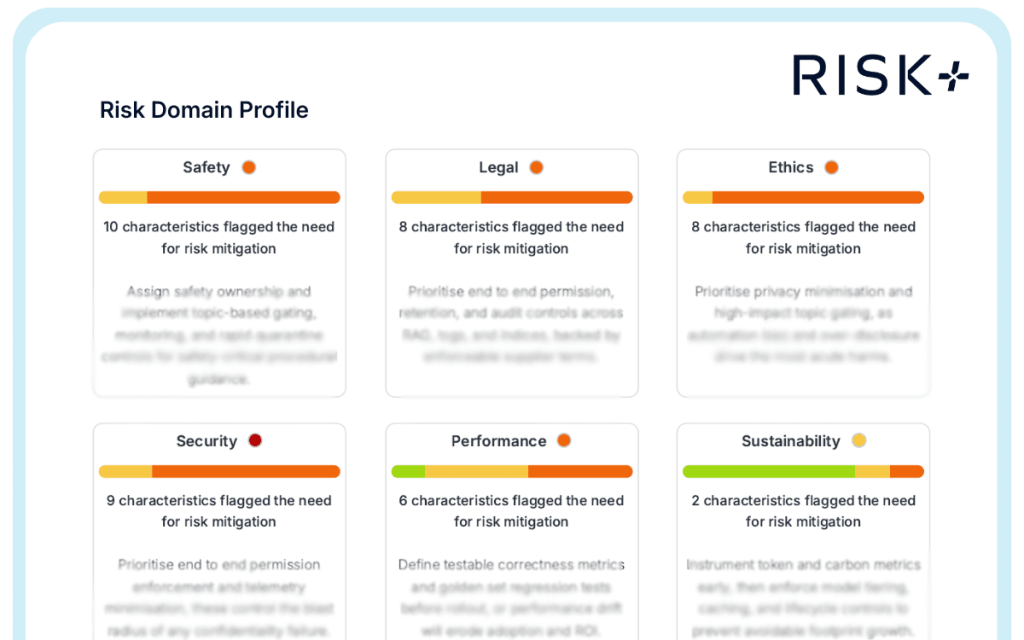

As the assessment illustration shows, a Risk+ snapshot identifies inherent exposure at a point in time. Keeping pace with how that exposure changes as a system matures is a different challenge: it requires risk management to be embedded in the operational lifecycle rather than applied at its edges. Ongoing control verification and lifecycle risk management are designed to address exactly this problem, and AIQURIS Control+ operationalises that discipline across the enterprise AI stack.

Sources

1. Wikipedia. 2026. “OpenClaw.” Last modified February 2026. https://en.wikipedia.org/wiki/OpenClaw

2. CrowdStrike. 2026. “What Security Teams Need to Know About OpenClaw, the AI Super Agent.” February. https://www.crowdstrike.com/en-us/blog/what-security-teams-need-to-know-about-openclaw-ai-super-agent/

3. Help Net Security. 2026. “OpenClaw Scanner: Open-Source Tool Detects Autonomous AI Agents.” February. https://www.helpnetsecurity.com/2026/02/12/openclaw-scanner-open-source-tool-detects-autonomous-ai-agents/

4. Cisco AI Threat and Security Research. 2026. “Personal AI Agents like OpenClaw Are a Security Nightmare.” January. https://blogs.cisco.com/ai/personal-ai-agents-like-openclaw-are-a-security-nightmare

5. Infosecurity Magazine. 2026. “Researchers Find 40,000+ Exposed OpenClaw Instances.” February. https://www.infosecurity-magazine.com/news/researchers-40000-exposed-openclaw/

6. Kaspersky. 2026. “New OpenClaw AI Agent Found Unsafe for Use.” February. https://www.kaspersky.com/blog/openclaw-vulnerabilities-exposed/55263/

7. Daxa AI. 2025. “Secure Retrieval-Augmented Generation (RAG) in Enterprise Environments.” August. https://www.daxa.ai/blogs/secure-retrieval-augmented-generation-rag-in-enterprise-environments

8. IronCore Labs. n.d. “Security Risks with RAG Architectures.” https://ironcorelabs.com/security-risks-rag/

9. Sombrainc. 2026. “LLM Security Risks in 2026: Prompt Injection, RAG, and Shadow AI.” January. https://sombrainc.com/blog/llm-security-risks-2026

10. Proofpoint. 2025. “What Is RAG (Retrieval-Augmented Generation)?” October. https://www.proofpoint.com/us/threat-reference/retrieval-augmented-generation-rag

11. Barracuda Networks. 2024. “OWASP Top 10 Risks for Large Language Models: 2025 Updates.” November. https://blog.barracuda.com/2024/11/20/owasp-top-10-risks-large-language-models-2025-updates

12. Giskard. 2026. “OpenClaw Security Issues Include Data Leakage and Prompt Injection.” February. https://www.giskard.ai/knowledge/openclaw-security-vulnerabilities-include-data-leakage-and-prompt-injection-risks